Helm for the Second Time – Versioning and Rollbacks for Your Application

We describe how to perform an update and rollback in Helm, how to flexibly overwrite values, and discover what templates are and how they work.

In this article, we introduce the topics of virtualization, containerization, and Docker. What is this software and why do we use it? What are the differences between virtual machines and Docker? All this and more in this article; we encourage you to read on.

Basic concepts

To understand what virtualization is and how Docker software works, it is necessary to understand the basic terms:

Thanks to containers, we can run our software on any system on which Docker is installed.

Purpose behind using Docker

If you have worked as a programmer in a team, you have probably run into technical problems related to software development. Docker won’t help us with all the coding difficulties, but it can eliminate the most frustrating obstacles, such as:

By enclosing the code in a container, we fix the environment in which the code is run, so we can expect it to behave the same way wherever the container is started.

Simply put, Docker standardizes the way we run applications and ensures that if the application runs on one environment, it will run the same on another – provided, of course, that Docker software is installed there.

Virtual Machines vs Containerization

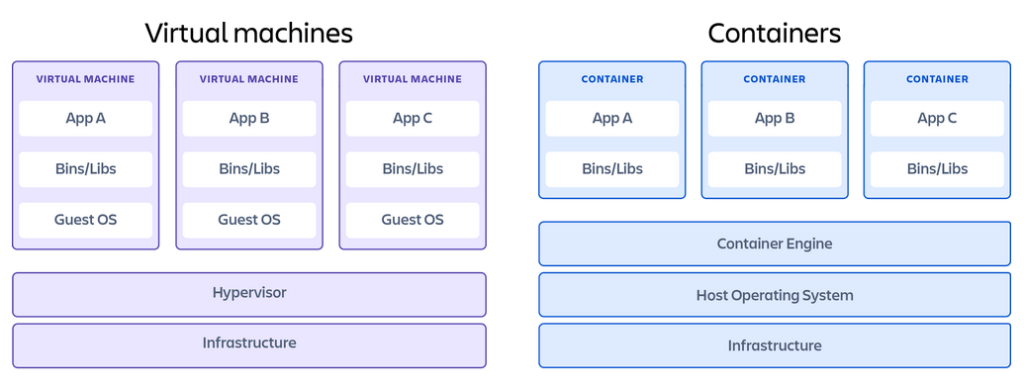

Virtualization is the simulation of the existence of logical resources through software that uses physical resources. Virtual machines can run an operating system inside another system. The host system then assigns certain resources to such a virtual machine. The key difference between containers and virtual machines is that the latter virtualize the entire machine including the operating system, sometimes referred to as the Guest OS. Containers virtualize only the software layers above the operating system level and are often used in microservices architecture, for example.

Picture 1: Comparison of virtual machines and containers. Source: Atlassian.

Docker installation

The installation of the software depends on the operating system, which is why we have decided to present the most popular option for Windows. You should:

After the installation, you should be able to execute the “docker” command in any chosen terminal (PowerShell, cmd).

Images vs containers

Beginners in containerization often confuse these two terms, yet understanding them is crucial for effective work with Docker:

An image is therefore a file from which we produce instances of our applications. The relationship is a bit like that between a class in programming and an object that is an instance of that class. If you’re not a programmer, you can think of an image as a recipe and a container as a finished dish (you can make the same dish multiple times from one recipe).
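The class-and-object analogy can be made concrete with a few lines of Python. This is only an illustrative sketch and does not use Docker at all: the class name `WebApp`, its `template` attribute, and the instance names are hypothetical, chosen to mirror how one image can yield several independent containers.

```python
class WebApp:
    """Stands in for an image: a template that is defined once."""

    # Hypothetical label shared by every instance, like the contents of an image.
    template = "web-app"

    def __init__(self, name):
        self.name = name  # each "container" gets its own name
        self.logs = []    # ...and its own runtime state

    def handle(self, request):
        """Record a request in this instance only."""
        self.logs.append(request)
        return f"{self.name} handled {request}"


# Two "containers" created from the same "image".
first = WebApp("web-1")
second = WebApp("web-2")

first.handle("GET /")

print(first.template == second.template)  # True: both come from one template
print(first.logs)                         # ['GET /']
print(second.logs)                        # []: state is not shared
```

Just as `first` and `second` share a definition but keep separate state, containers started from the same image share its contents while running independently of each other.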

Dockerfile

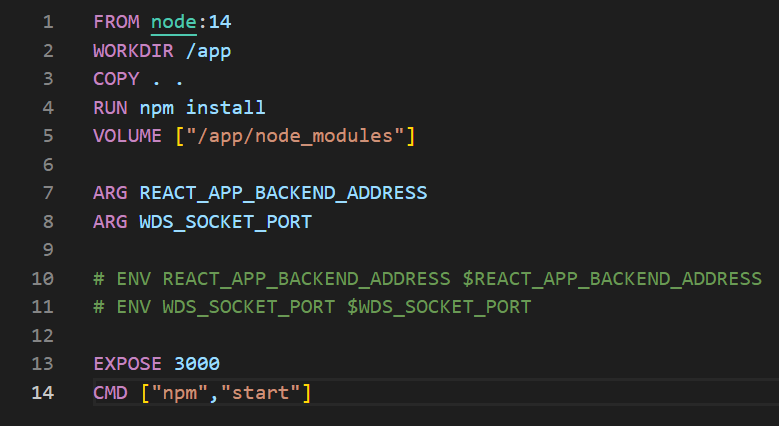

A Dockerfile is a file that contains the configuration used to generate an image. In other words, it defines which interpreter and libraries our application should use when it runs.

Picture 2: Example Dockerfile for running a React.js application.

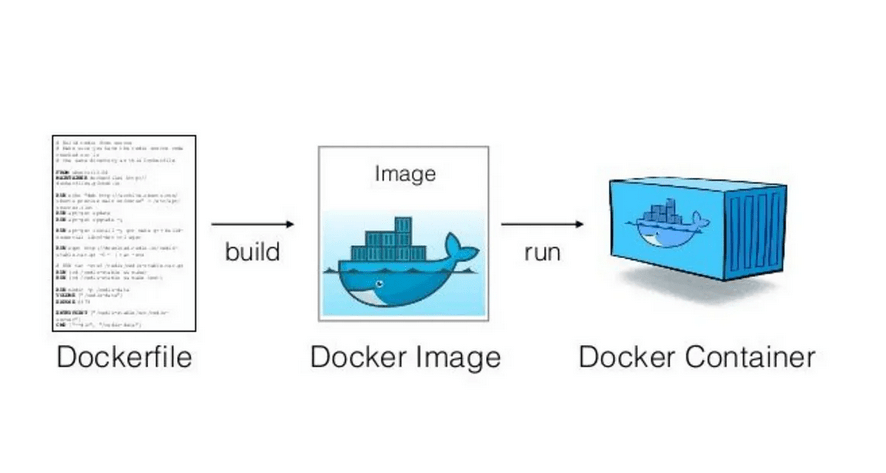

From the defined Dockerfile, an image can be generated, and from the image, a container can be run. If you feel lost due to the new concepts, you can refer to Picture 3, taken from medium.com.

Picture 3: Workflow that accompanies the creation of a container. Source: medium.com.

Summary

We hope that we have presented the reasons why it is worth learning Docker and how it can help in daily work. In the next article, we will try to dive into how this software works and teach you the basics of handling it.

Source:

https://pl.wikipedia.org/wiki/Wirtualizacja

https://www.atlassian.com/pl/microservices/cloud-computing/containers-vs-vms

https://www.ibm.com/cloud/blog/containers-vs-vms
